Performance

In this section, we go over some real-world performance numbers for Hudi upserts and incremental pull, and compare them against the conventional alternatives for achieving these tasks.

Upserts

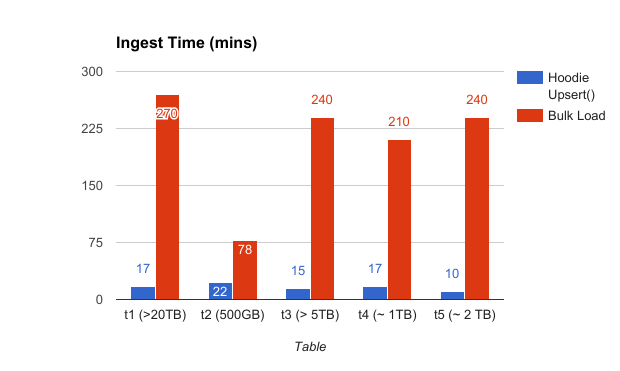

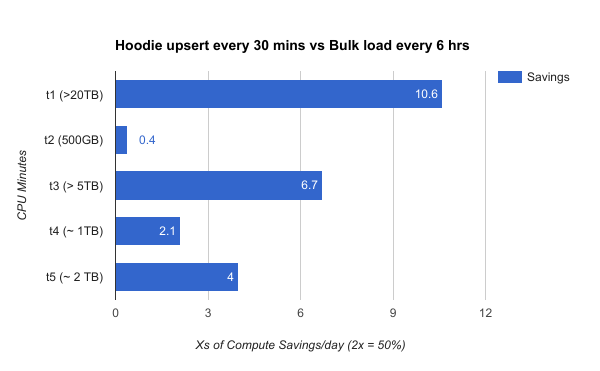

The following shows the speedup obtained for NoSQL database ingestion by incrementally upserting into a Hudi dataset on copy-on-write storage, as opposed to bulk loading the tables, across 5 tables ranging from small to huge.

Because Hudi can build the dataset incrementally, it also opens the door to scheduling ingestion more frequently, thus reducing latency, with significant savings on the overall compute cost.

Hudi upserts have been stress tested up to 4TB in a single commit across the t1 table. See here for some tuning tips.

Indexing

To upsert data efficiently, Hudi needs to classify the records in a write batch into inserts and updates (each update tagged with the file group it belongs to). To speed up this operation, Hudi employs a pluggable index mechanism that stores a mapping between a recordKey and the id of the file group it belongs to. By default, Hudi uses a built-in index that relies on file ranges and bloom filters to accomplish this, with up to a 10x speedup over a Spark join doing the same.
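The tagging step described above can be illustrated with a small, self-contained sketch. This is not Hudi's implementation: the `BloomFilter`, `FileGroup`, and `tag_records` names are hypothetical, the filter sizes are arbitrary, and an in-memory set stands in for the keys stored in each file. It only shows the general idea of pruning by key range first, then consulting a bloom filter, and only then checking the file itself.

```python
import hashlib
from dataclasses import dataclass, field


class BloomFilter:
    """Tiny Bloom filter: may give false positives, never false negatives."""

    def __init__(self, num_bits=1024, num_hashes=3):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = 0  # a Python int used as a bit set

    def _positions(self, key):
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{key}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, key):
        for pos in self._positions(key):
            self.bits |= 1 << pos

    def might_contain(self, key):
        return all((self.bits >> pos) & 1 for pos in self._positions(key))


@dataclass
class FileGroup:
    """Hypothetical stand-in for a file group and its index metadata."""
    file_id: str
    keys: set  # stands in for the record keys actually stored in the file
    min_key: str = field(init=False)
    max_key: str = field(init=False)
    bloom: BloomFilter = field(init=False)

    def __post_init__(self):
        self.min_key, self.max_key = min(self.keys), max(self.keys)
        self.bloom = BloomFilter()
        for key in self.keys:
            self.bloom.add(key)


def tag_records(record_keys, file_groups):
    """Map each incoming key to a file id (update) or None (insert)."""
    tagged = {}
    for key in record_keys:
        tagged[key] = None
        for fg in file_groups:
            if not (fg.min_key <= key <= fg.max_key):
                continue  # range pruning: key cannot be in this file
            if not fg.bloom.might_contain(key):
                continue  # bloom filter: key is definitely absent
            if key in fg.keys:  # confirm, since the filter may be a false positive
                tagged[key] = fg.file_id
                break
    return tagged


groups = [
    FileGroup("fg-1", {"20240101-a", "20240101-b"}),
    FileGroup("fg-2", {"20240102-a", "20240102-b"}),
]
result = tag_records(["20240101-b", "20240102-c", "20240103-a"], groups)
```

In this toy run, `20240101-b` is tagged as an update to `fg-1`, while the other two keys fall outside every file's key range and are treated as inserts without any file being opened.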

Hudi provides the best indexing performance when you model the recordKey to be monotonically increasing (e.g. with a timestamp prefix), so that range pruning filters out a large number of files from comparison. Even for UUID-based keys, there are known techniques to achieve this. For example, with 100M timestamp-prefixed keys (5% updates, 95% inserts) on an event table with 80B keys/3 partitions/11416 files/10TB data, the Hudi index achieves a ~7X (2880 secs vs 440 secs) speedup over a vanilla Spark join. Even for a challenging workload like a '100% update' database ingestion workload spanning 3.25B UUID keys/30 partitions/6180 files using 300 cores, Hudi indexing offers an 80-100% speedup.
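A rough way to see why monotonically increasing keys help range pruning is to count how many files remain candidates for a lookup after the min/max check. The sketch below is a synthetic illustration only: the key formats, file sizes, and helper names (`build_files`, `avg_candidate_files`) are assumptions and do not reflect Hudi's file layout or the workloads quoted above.

```python
import random
import uuid


def build_files(keys, keys_per_file):
    """Split keys in arrival order into files; return (min_key, max_key) per file."""
    ranges = []
    for i in range(0, len(keys), keys_per_file):
        chunk = keys[i:i + keys_per_file]
        ranges.append((min(chunk), max(chunk)))
    return ranges


def avg_candidate_files(incoming, ranges):
    """Average number of files whose key range could contain an incoming key."""
    total = sum(1 for key in incoming for lo, hi in ranges if lo <= key <= hi)
    return total / len(incoming)


rng = random.Random(42)
num_keys, keys_per_file = 10_000, 100

# Keys with a zero-padded, monotonically increasing prefix (a stand-in for a timestamp).
ts_keys = [f"{i:012d}-{rng.getrandbits(32):08x}" for i in range(num_keys)]
# Random UUID keys with no ordering relative to arrival time.
uuid_keys = [str(uuid.UUID(int=rng.getrandbits(128))) for _ in range(num_keys)]

ts_ranges = build_files(ts_keys, keys_per_file)
uuid_ranges = build_files(uuid_keys, keys_per_file)

# Look up a sample of existing keys, as an update-heavy batch would.
ts_avg = avg_candidate_files(rng.sample(ts_keys, 200), ts_ranges)
uuid_avg = avg_candidate_files(rng.sample(uuid_keys, 200), uuid_ranges)

print(f"prefixed keys: {ts_avg:.1f} candidate files per key out of {len(ts_ranges)}")
print(f"UUID keys:     {uuid_avg:.1f} candidate files per key out of {len(uuid_ranges)}")
```

With ordered keys, each file covers a narrow, disjoint key range, so a lookup is left with a single candidate file. With random UUIDs, nearly every file's range spans most of the key space, so almost all files survive pruning and the bloom filters have to do the remaining work.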

Read Optimized Queries

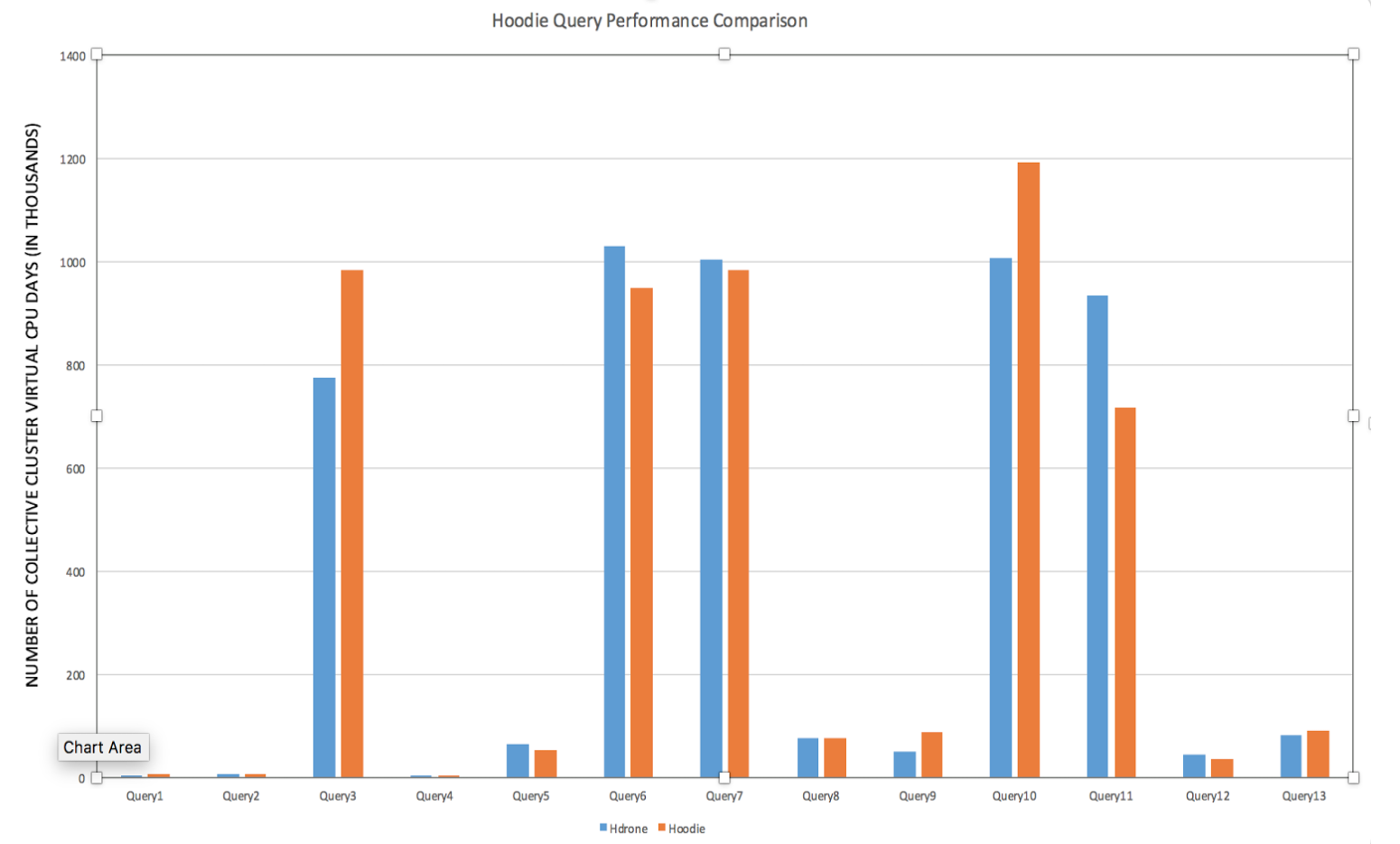

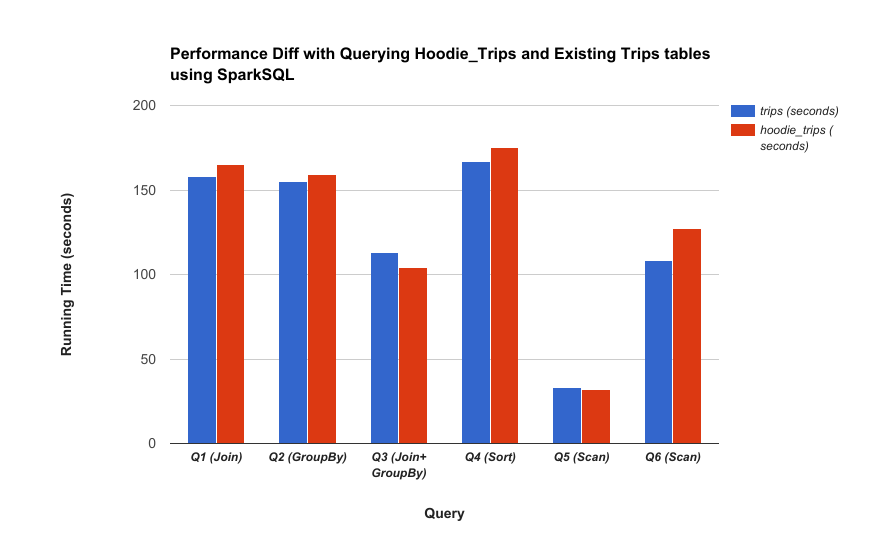

The major design goal for the read optimized view is to achieve the latency reduction and efficiency gains described in the previous section, with no impact on queries. The following charts compare Hudi and non-Hudi datasets across Hive/Presto/Spark queries and demonstrate this.

Hive

Spark

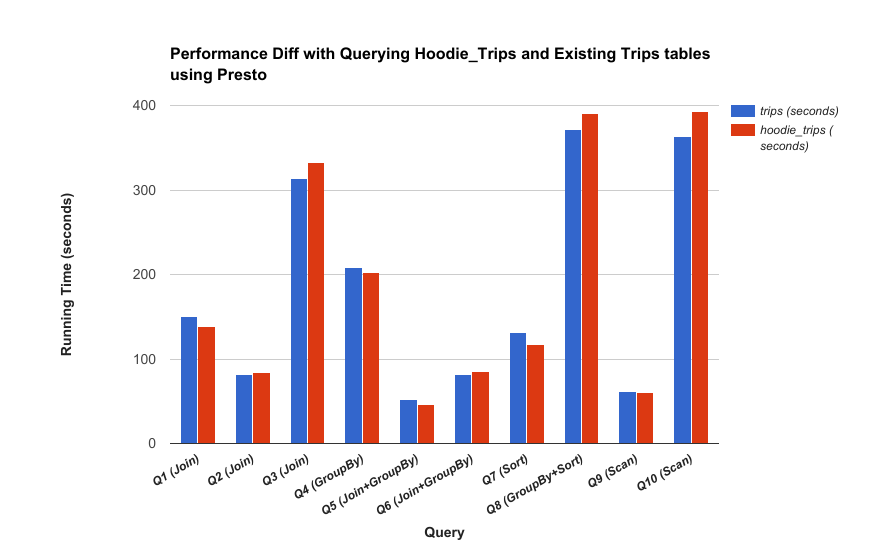

Presto